They ask the language model first and hope the answer sounds right.

Nature solved this problem millions of years ago.

In a beehive, a discovery does not become a decision because one actor says so. A scout returns to the hive and dances a figure-eight on the vertical comb surface — the angle of the straight section tells direction, the duration tells distance, the vigor tells quality. But the dance is not a monologue. More experienced sisters follow the dancer, touch her with their antennae, and give feedback in real time. A stop signal can halt the dance entirely. Only when the message survives community scrutiny does a route worth committing to emerge.

WaggleDance is built on this logic.

It does not hand the problem directly to an LLM. It first routes the problem to the right solver, verifies the result through multiple agents, and uses a language model only when it genuinely helps. Every step leaves an auditable trace. Every solution is justifiable. Every cycle grows the system’s own expertise.

The figure-eight dance became algorithmic routing. The honeycomb became the MAGMA memory architecture. And the bees’ overnight rest became Dream Mode — a simulation where the system reviews the day’s failures, tests thousands of alternative paths, and returns in the morning wiser.

This is not a metaphor. This is an architecture for collective machine intelligence.

Download, fork, and run locally right away. The entire repo is available on GitHub without registration.

License model: Apache 2.0 + BUSL 1.1 (open core + source-available protected modules). Check the terms on GitHub.

BUSL module change date: March 18, 2030.

Solvers go first. The verifier checks. The LLM only joins when the right solver isn’t enough.
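The solver-first flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not WaggleDance's actual API. The names `route`, `RouteResult`, and the toy `add_solver`, along with the verifier and LLM callables, are hypothetical stand-ins. The real system verifies through multiple agents, while this sketch uses a single function.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical interfaces, not WaggleDance's real API:
# a solver returns an answer, or None if it cannot handle the query.
Solver = Callable[[str], Optional[str]]
Verifier = Callable[[str, str], bool]
LLM = Callable[[str], str]


@dataclass
class RouteResult:
    answer: str
    source: str                                     # solver name, or "llm"
    trace: List[str] = field(default_factory=list)  # ordered routing steps


def route(query: str, solvers: Dict[str, Solver], verify: Verifier, llm: LLM) -> RouteResult:
    """Try solvers in insertion order; accept the first verified answer; else use the LLM."""
    trace: List[str] = []
    for name, solver in solvers.items():
        answer = solver(query)
        if answer is None:
            trace.append(f"{name}: no answer")
        elif verify(query, answer):
            trace.append(f"{name}: verified")
            return RouteResult(answer, name, trace)
        else:
            trace.append(f"{name}: rejected by verifier")
    # No solver produced a verified answer, so the language model joins.
    trace.append("llm: fallback")
    return RouteResult(llm(query), "llm", trace)


def add_solver(query: str) -> Optional[str]:
    """Toy deterministic solver: handles queries like 'add 2 3'."""
    parts = query.split()
    if len(parts) != 3 or parts[0] != "add":
        return None
    try:
        return str(int(parts[1]) + int(parts[2]))
    except ValueError:
        return None  # not two integers: this solver cannot answer


if __name__ == "__main__":
    solvers = {"add": add_solver}
    verify = lambda q, a: a != ""
    llm = lambda q: f"(LLM answer to {q!r})"
    print(route("add 2 3", solvers, verify, llm))
    print(route("why do bees dance?", solvers, verify, llm))
```

The trace list is what makes each step auditable in this sketch. In this version the LLM's answer is returned unverified. A stricter variant could pass it through the same verifier.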

MAGMA records decisions, sources, replays, and trust scores. See what happened, why, and in what order.
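To make "decisions, sources, replays, and trust scores" concrete, here is a minimal append-only audit log in Python. The class and field names are hypothetical. The trust score is an assumption chosen for illustration: the share of a source's past answers that passed verification, starting from a neutral 0.5. MAGMA's actual storage and scoring are not described on this page.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass(frozen=True)
class AuditRecord:
    seq: int          # position in the log: "in what order"
    timestamp: str    # UTC time, ISO 8601
    query: str
    source: str       # solver or model that produced the decision: "what happened"
    reason: str       # why the decision was accepted or rejected: "why"
    verified: bool
    trust: float      # trust score of the source at decision time


class AuditLog:
    """Hypothetical append-only log: records are added, never modified or deleted."""

    def __init__(self) -> None:
        self._records: List[AuditRecord] = []
        self._outcomes: Dict[str, List[bool]] = {}

    def trust(self, source: str) -> float:
        # Assumed metric: fraction of past verified outcomes, 0.5 with no history.
        outcomes = self._outcomes.get(source, [])
        return sum(outcomes) / len(outcomes) if outcomes else 0.5

    def record(self, query: str, source: str, reason: str, verified: bool) -> AuditRecord:
        rec = AuditRecord(
            seq=len(self._records),
            timestamp=datetime.now(timezone.utc).isoformat(),
            query=query,
            source=source,
            reason=reason,
            verified=verified,
            trust=self.trust(source),  # score before this outcome is counted
        )
        self._records.append(rec)
        self._outcomes.setdefault(source, []).append(verified)
        return rec

    def replay(self, query: Optional[str] = None) -> List[AuditRecord]:
        """Return records in original order, optionally only those for one query."""
        return [r for r in self._records if query is None or r.query == query]
```

Frozen records and an append-only list keep history immutable within this sketch. A deployed log would also need persistence and tamper protection, which are out of scope here.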

Dream Mode reviews failures, simulates better routes, and builds better models for the next day.
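One way to picture the nightly review is as an offline replay. The day's failed queries are re-run against alternative routes, and only routes that would have done better are kept. The Python sketch below is a simplified illustration under that assumption. `dream`, `min_success`, and the promotion rule are hypothetical. It also only scores existing candidate routes rather than building new models.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical interfaces, matching a solver-first router.
Solver = Callable[[str], Optional[str]]
Verifier = Callable[[str, str], bool]


def dream(
    failures: List[str],
    candidates: Dict[str, Solver],
    verify: Verifier,
    min_success: float = 0.8,
) -> Dict[str, float]:
    """Replay the day's failed queries against each candidate route offline.

    Returns {candidate: success_rate} for candidates whose verified success rate
    reaches min_success; these would be canary-promotion candidates for the next day.
    """
    if not failures:
        return {}
    promoted: Dict[str, float] = {}
    for name, solver in candidates.items():
        successes = 0
        for query in failures:
            answer = solver(query)
            if answer is not None and verify(query, answer):
                successes += 1
        rate = successes / len(failures)
        if rate >= min_success:
            promoted[name] = rate
    return promoted


if __name__ == "__main__":
    def upper_solver(q: str) -> Optional[str]:
        # Toy candidate route: only handles ASCII text.
        return q.upper() if q.isascii() else None

    day_failures = ["hello", "world", "päivää"]
    print(dream(day_failures, {"upper": upper_solver}, lambda q, a: True, min_success=0.6))
```

Promoting only above a success threshold keeps a candidate out of live traffic until the replay suggests it beats the failed route. A canary rollout would then confirm it on real queries.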

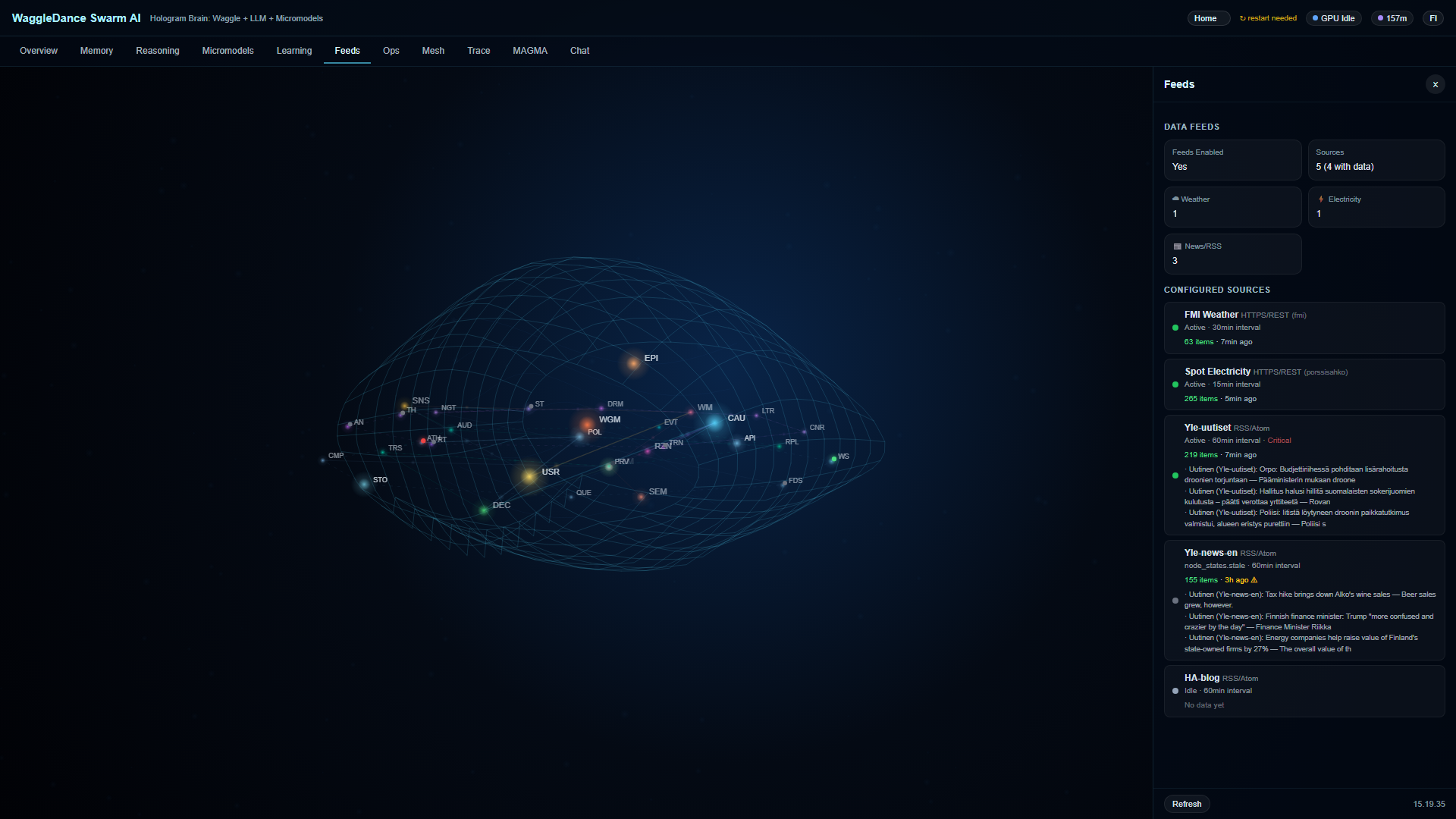

Hologram Brain makes the state of 32 nodes visible in real time. You’re not watching a black box — you’re watching a working system.

Everything runs in your own environment. No mandatory cloud, no prompt data leaving your machine, no SaaS dependency.

The same codebase scales from a Raspberry Pi to the factory profile. Not just a demo, not just a framework.

RPi, edge, sensor

Offline, intermittent connection

Local automation

Monitoring, anomalies, audit

Dashboard and Hologram Brain are available immediately after startup. First response speed depends on profile, hardware, and whether full or stub mode is used.

The prompt is sent directly to Grok and copied to your clipboard as a backup.

Grok opens in a new tab with the prompt ready.

You get a comprehensive analysis of the repo, a scored competitor comparison, and a factory-readiness assessment.

If pre-fill doesn’t work, paste manually — the prompt is already on your clipboard.

You can also use the same prompt in Claude, ChatGPT, or any other LLM. Grok is the default choice on this page.

When you click “Analyze Repository”, the AI performs a deep analysis covering:

Main branch, architecture, modules, and latest commits

What is implemented vs. what is planned or aspirational

Test coverage, practical maturity, and production readiness

Memory model, audit architecture, provenance, and trust mechanisms

Scoring from 1 to 10 on six axes against Home Assistant, Node-RED, n8n, Open WebUI, LangGraph, AutoGen, CrewAI, and Ollama

Industrial use cases, risks, missing integrations, deployment blockers

Click a prompt to copy it. Paste into your Grok session for deeper exploration.

Choose a profile and get a tailored deployment guide from Grok.

Each tool below is good at what it does. The comparison is meant to show how WaggleDance’s solver-first architecture differs — not to claim others are bad.

Clone, then `docker compose up -d`: Ollama, Voikko (Finnish NLP), and the app all in one.

None of the compared tools improves autonomously over time. WaggleDance is the only one that builds cumulative expertise.

| Time | WaggleDance | Home Assistant | LangGraph | AutoGen/CrewAI | Node-RED/n8n | Ollama |
|---|---|---|---|---|---|---|
| Day 1 | LLM fallback ~30-50%, solvers learning | Same as always | Same as always | Same as always | Same as always | Same as always |
| Month 1 | HotCache fills, LLM ~20-30%, first canary promotions | No change | No change | No change | No change | No change |
| Month 6 | LLM ~10-15%, specialists maturing, ~180 nights of Dream Mode | No change | No change | No change | No change | No change |
| Year 1 | LLM ~5-8%, MAGMA with thousands of audited paths | No change | No change | No change | No change | No change |
| Year 2 | LLM <3-5%, >95% deterministic, TCO a fraction of day 1 | No change | No change | No change | No change | No change |

The competitor columns show no change after day 1. They don't learn. They don't improve. On day 730, they are exactly the same as on day 1.

Yes. Download and run it immediately. The Apache 2.0 parts are freely usable, and non-commercial personal use of the BUSL-protected modules is permitted. For commercial use, check the license terms on GitHub.

No. WaggleDance is designed to work fully offline on local hardware. An internet connection is needed only for initial setup and updates.

Minimum: Raspberry Pi 4 or equivalent (GADGET profile). Recommended: modern x86 server for multi-agent orchestration (FACTORY profile).

You get a quick second technical opinion on the public repo, documentation, and competitive landscape. You can use the same prompt in Claude, ChatGPT, or any other LLM.

An auditing and provenance framework. Every agent decision is recorded, giving you traceability, replay, and visible trust assessments.

An overnight learning mode where the system reviews the day's failures, simulates better routes, and builds better models for the next day, automatically and without user action.

Dashboard and Hologram Brain are available immediately. First response speed depends on profile and hardware.