They send the problem straight to a language model and hope the answer turns out right.

Nature solved this problem millions of years ago.

In a bee colony, a discovery does not become a decision because one bee decides. A scout bee returns to the hive and performs a figure-eight waggle dance on the vertical comb: the angle indicates direction, the duration indicates distance, and the vigor indicates quality. But the dance is not a monologue. Sister bees follow the dancer, touch her with their antennae, and give feedback in real time. Stop signals can halt the dance entirely. Only when the message survives social scrutiny is the route worth taking.

WaggleDance is built on this logic.

It does not hand the problem directly to an LLM. It first routes the problem to the right solver, validates the result across multiple agents, and uses language models only when they genuinely help. Every step leaves a traceable trail.

The waggle dance became algorithmic routing. The honeycomb became the MAGMA memory structure. And the bees' nightly sleep became Dream Mode: a simulation in which the system reviews the day's failures, tests thousands of alternative routes, and wakes up wiser.

This is not a metaphor. This is the engineering of collective intelligence.

Download, fork, and run locally right away. The full repository is available on GitHub with no registration.

License: Apache 2.0 + BUSL 1.1. See the terms on GitHub.

BUSL change date: March 18, 2030.

Solvers go first. The validator verifies. The LLM steps in only when the right solver is not enough.
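The solver-first order can be illustrated with a minimal sketch. Everything named here (`route`, `Answer`, `arithmetic_solver`, `non_empty`, `fake_llm`) is hypothetical and chosen for illustration only; it is not WaggleDance's actual API, and the real validator reportedly involves several agents rather than a single check.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical types for illustration; not WaggleDance's actual API.
Solver = Callable[[str], Optional[str]]
Validator = Callable[[str, str], bool]


@dataclass
class Answer:
    text: str
    source: str  # "solver:<name>" or "llm", so the route stays traceable


def route(task: str,
          solvers: dict[str, Solver],
          validator: Validator,
          llm: Callable[[str], str]) -> Answer:
    """Try deterministic solvers first; fall back to the LLM last."""
    for name, solver in solvers.items():
        candidate = solver(task)
        # Accept a solver result only if it exists and passes validation.
        if candidate is not None and validator(task, candidate):
            return Answer(candidate, f"solver:{name}")
    return Answer(llm(task), "llm")


def arithmetic_solver(task: str) -> Optional[str]:
    """Toy solver: handles only 'a + b' with integer operands."""
    parts = task.split("+")
    if len(parts) != 2:
        return None
    try:
        return str(int(parts[0]) + int(parts[1]))
    except ValueError:
        return None


def non_empty(task: str, candidate: str) -> bool:
    """Toy validator: stands in for multi-agent validation."""
    return bool(candidate.strip())


def fake_llm(task: str) -> str:
    """Placeholder for a real model call."""
    return f"[LLM answer for: {task}]"


solvers = {"arithmetic": arithmetic_solver}
first = route("2 + 3", solvers, non_empty, fake_llm)
second = route("Summarize the log", solvers, non_empty, fake_llm)
print(first.source, second.source)
```

In this sketch the arithmetic task never reaches the model, while the free-text task falls through to it; recording `source` on every answer is what makes the chosen route visible afterwards.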

MAGMA records decisions, sources, replay, and trust levels. See what was done, why, and in what order.
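As a rough illustration of what such a record could look like, here is a minimal append-only audit trail. The class and field names (`AuditTrail`, `AuditRecord`, `decision`, `reason`, `source`, `trust`) are assumptions made for this sketch and do not describe MAGMA's real schema or storage.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    """One hypothetical audit entry: what was done, why, from where, how trusted."""
    step: int
    decision: str
    reason: str
    source: str
    trust: float


class AuditTrail:
    """Append-only log; records are never edited or removed."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def record(self, decision: str, reason: str, source: str, trust: float) -> AuditRecord:
        # The step number preserves the order in which decisions were made.
        rec = AuditRecord(len(self._records) + 1, decision, reason, source, trust)
        self._records.append(rec)
        return rec

    def replay(self) -> list[AuditRecord]:
        """Return a copy of the records in the order they were written."""
        return list(self._records)

    def to_jsonl(self) -> str:
        """Serialize one JSON object per line for external inspection."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


trail = AuditTrail()
trail.record("route to arithmetic solver", "task matched 'a + b'", "solver:arithmetic", 0.95)
trail.record("accept result", "validator passed", "validator", 0.90)
steps = [r.step for r in trail.replay()]
print(trail.to_jsonl())
```

Frozen records and a copy-returning `replay` keep the history read-only from the caller's side, which is the property an audit trail needs most.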

Dream Mode reviews failures, simulates better routes, and builds better models for the next day.
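One way to picture this loop is an offline replay: take the day's failed tasks, try each alternative route against them, and remember which route would have succeeded. The sketch below assumes the expected answer for each failed task is known after the fact, which may not match how Dream Mode actually scores routes; `dream`, `Route`, and the toy routes are hypothetical names.

```python
from typing import Callable, Optional

# Hypothetical route type: takes a task, returns an answer or None.
Route = Callable[[str], Optional[str]]


def dream(failures: list[tuple[str, str]],
          routes: dict[str, Route]) -> dict[str, str]:
    """Replay failed (task, expected) pairs and map each task to the
    first alternative route that reproduces the expected answer."""
    promoted: dict[str, str] = {}
    for task, expected in failures:
        for name, candidate_route in routes.items():
            if candidate_route(task) == expected:
                promoted[task] = name
                break  # the first route that works is enough for this sketch
    return promoted


# Toy routes standing in for real solvers.
routes = {
    "uppercase": lambda task: task.upper(),
    "reverse": lambda task: task[::-1],
}

# Tasks that failed during the day, with the answers that were expected.
failures = [("abc", "cba"), ("hive", "HIVE"), ("bee", "wasp")]
promoted = dream(failures, routes)
print(promoted)
```

Tasks with no successful route, such as the last one here, simply stay unpromoted and would still need the fallback the next day.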

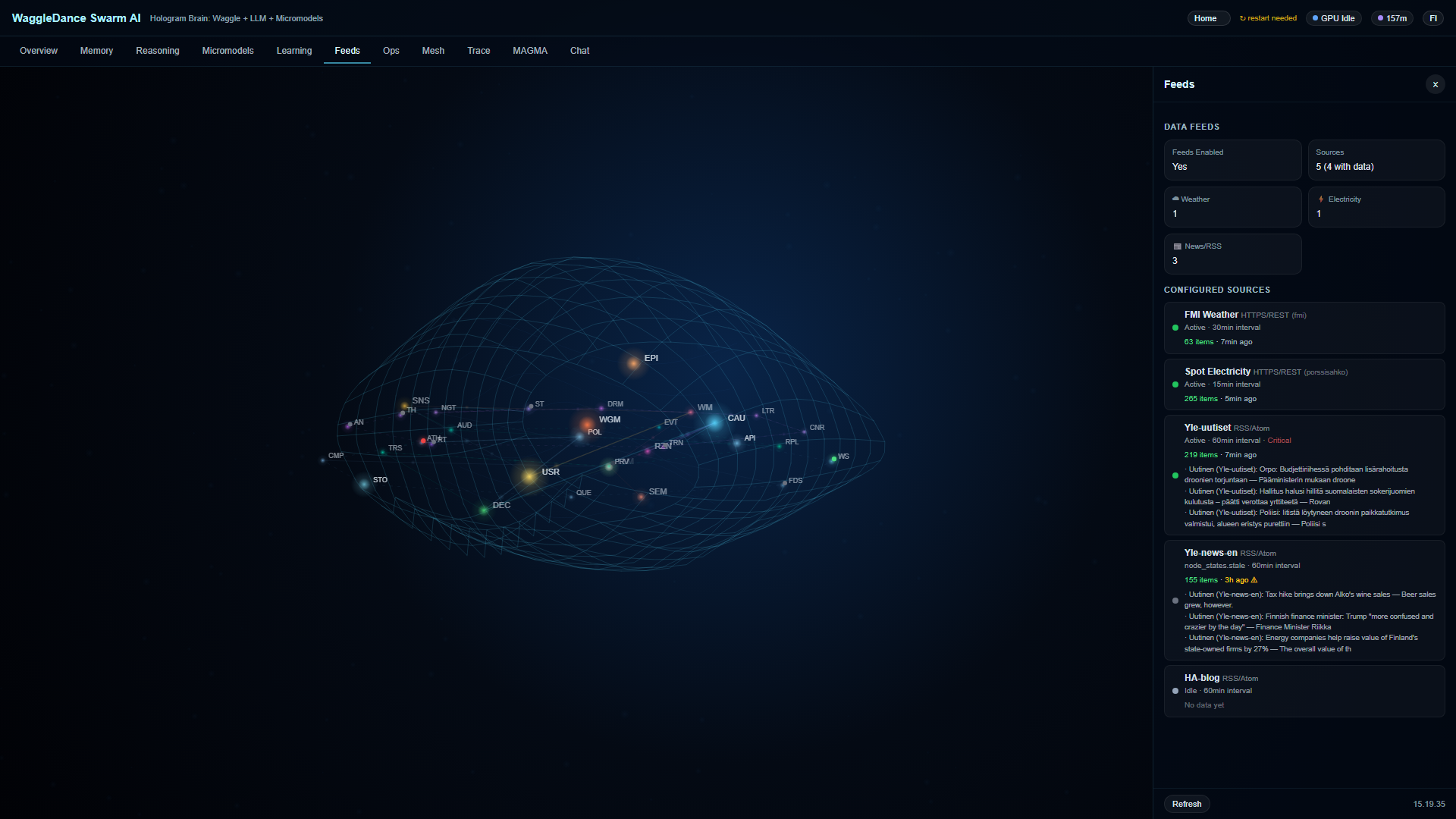

Hologram Brain makes the state of 32 nodes visible in real time. You are not looking at a black box, but at a working system that explains what it is doing and why.

Everything runs in your own environment. No mandatory cloud, no prompt data leaving your machine, no SaaS dependency.

The same code runs from a Raspberry Pi up to the factory profile.

RPi, edge, sensor

Offline, intermittent connection

Home automation

Monitoring, anomalies, audit

Dashboard and Hologram Brain are ready immediately after startup. First-response speed depends on the profile, the hardware, and whether you run in full or stub mode.

The prompt is sent directly to Grok and is also saved to your clipboard as a backup

Grok opens in a new tab with the prompt prefilled

You receive a deep analysis of the repository, a scored comparison with competitors, and an industrial assessment

If the prefill does not work, paste it manually; the prompt is already in your clipboard.

You can use the same prompt in Claude, ChatGPT, or any other LLM. Grok is the default option on this page.

When you click “Analyze repository”, the system runs a deep analysis:

Main branch, structure, modules, and recent commits

What is implemented versus what is planned or aspirational

Test coverage, practical maturity, and production readiness

Memory model, audit architecture, provenance, and trust mechanisms

Scores of 1-10 on six axes against Home Assistant, Node-RED, n8n, Open WebUI, LangGraph, AutoGen, CrewAI, Ollama

Industrial use cases, risks, missing connectors, deployment limits

Click the prompt to copy it. Paste it into a Grok session for a deeper investigation.

Choose a profile and get a personalized deployment plan from Grok.

Each tool below is good at what it does. The comparison shows how WaggleDance's solver-first architecture differs, not that the others are bad.

clone → docker compose up -d: Ollama, Voikko (Finnish NLP), and the app all in one.

No competitor improves autonomously over time. WaggleDance is the only one that builds cumulative expertise.

| Time | WaggleDance | Home Assistant | LangGraph | AutoGen/CrewAI | Node-RED/n8n | Ollama |
|---|---|---|---|---|---|---|
| Day 1 | LLM fallback ~30-50%, solvers learning | Same as always | Same as always | Same as always | Same as always | Same as always |
| Month 1 | HotCache fills, LLM ~20-30%, first canary promotions | No change | No change | No change | No change | No change |
| Month 6 | LLM ~10-15%, specialists maturing, ~180 nights of Dream Mode | No change | No change | No change | No change | No change |
| Year 1 | LLM ~5-8%, MAGMA with thousands of audited paths | No change | No change | No change | No change | No change |
| Year 2 | LLM <3-5%, >95% deterministic, TCO a fraction of day 1 | No change | No change | No change | No change | No change |

The competitors' columns read "No change" in every row after day 1. They don't learn. They don't improve. On day 730, they are exactly the same as on day 1.

Yes. Download and run immediately. Apache 2.0 parts are freely usable. Non-commercial personal use of BUSL-protected modules is permitted. For commercial use, check the license terms on GitHub.

No. WaggleDance is designed to work fully offline on local hardware. Internet is only needed for initial setup and updates.

Minimum: Raspberry Pi 4 or equivalent (GADGET profile). Recommended: modern x86 server for multi-agent orchestration (FACTORY profile).

You get a quick second technical opinion on the public repo, documentation, and competitive landscape. You can use the same prompt in Claude, ChatGPT, or any other LLM.

An auditing and provenance framework. Every agent decision is recorded so you get traceability, replay, and trust assessment visibility.

An overnight learning mode where the system reviews the day's failures, simulates better routes, and builds better models for the next day — automatically without user action.

Dashboard and Hologram Brain are available immediately. First response speed depends on profile and hardware.