They ask a language model first and hope the answer looks right.

Nature solved this problem millions of years ago.

In a beehive, a discovery does not become a decision because one leader says yes. A scout returns to the hive and performs a waggle dance on the vertical comb: the angle of the straight run signals direction, its duration signals distance, and its vigor signals quality. But the dance is not a monologue. Worker bees follow the dancer, touch her with their antennae, and give feedback in real time. Stop signals can halt the dance entirely. Only when the message survives this local scrutiny does the route worth following emerge.

WaggleDance is built on this principle.

It does not send a problem straight to an LLM. It first routes the problem to the right solver, verifies the result through multiple agents, and uses language models only when they genuinely help. Every step leaves an audit trail. Every problem can be verified. Every run builds the system's own expertise.
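The solver-first flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `Solver`, `AuditEntry`, `route`, and the toy `adder` are hypothetical names invented for this example and are not taken from the WaggleDance codebase.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical types for illustration only; not WaggleDance's actual API.
@dataclass
class AuditEntry:
    step: str    # "solve", "verify", or "llm_fallback"
    detail: str

@dataclass
class Solver:
    name: str
    can_handle: Callable[[str], bool]
    solve: Callable[[str], Optional[str]]

Verifier = Callable[[str, str], bool]

def route(problem: str,
          solvers: List[Solver],
          verifiers: List[Verifier],
          llm_fallback: Callable[[str], str]) -> Tuple[str, List[AuditEntry]]:
    """Try deterministic solvers first, verify each answer, fall back to the LLM last."""
    trail: List[AuditEntry] = []
    for solver in solvers:
        if not solver.can_handle(problem):
            continue
        answer = solver.solve(problem)
        trail.append(AuditEntry("solve", f"{solver.name} -> {answer!r}"))
        if answer is None:
            continue
        votes = [verify(problem, answer) for verify in verifiers]
        trail.append(AuditEntry("verify", f"{sum(votes)}/{len(votes)} approvals"))
        # Accept only when at least one verifier ran and all of them approved.
        if votes and all(votes):
            return answer, trail
    answer = llm_fallback(problem)
    trail.append(AuditEntry("llm_fallback", repr(answer)))
    return answer, trail

# Toy usage: an integer adder with an independent re-check.
def add(problem: str) -> str:
    left, right = problem.split("+")
    return str(int(left) + int(right))

def recheck(problem: str, answer: str) -> bool:
    left, right = problem.split("+")
    return int(answer) - int(right) == int(left)

adder = Solver("adder", lambda p: "+" in p, add)
answer, trail = route("2+3", [adder], [recheck], lambda p: "llm:" + p)
print(answer, [entry.step for entry in trail])  # 5 ['solve', 'verify']
```

In this sketch the language model is called only when no solver produces a verified answer, and every step appends to the trail that is returned with the result.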

The waggle dance became algorithmic routing. The comb became the MAGMA memory system. And the bees' nightly rest became Dream Mode: a simulation in which the system reviews the day's failures, simulates thousands of alternative routes, and wakes up a little wiser.

This is not a metaphor. This is an architecture for collective machine intelligence.

Download, fork, and run locally right away. The entire repo is on GitHub, with no registration required.

License model: Apache 2.0 + BUSL 1.1 (open core + source-available protected modules). Check the terms on GitHub.

BUSL module change date: March 18, 2030.

Solvers run first. The verifier checks. The LLM steps in only when the right solver alone is not enough.

MAGMA records decisions, provenance, replays, and trust scores. See what happened, why, and in what order.
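As a rough illustration of what an audit record with provenance, trust scores, and replay could look like, here is a minimal append-only log. Everything in it is an assumption made for this example: the `Record` fields, the `AuditLog` class, and in particular the SHA-256 hash chaining, which is a common tamper-evidence technique and not something this page says MAGMA uses.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List, Tuple

# Hypothetical record layout; MAGMA's real schema is not described on this page.
@dataclass(frozen=True)
class Record:
    seq: int          # position in the log, gives the replay order
    decision: str     # what happened
    source: str       # why: which rule, solver, or verifier produced it
    trust: float      # trust score attached to the decision
    prev_hash: str    # digest of the previous record ("" for the first)

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

class AuditLog:
    def __init__(self) -> None:
        self._records: List[Record] = []

    def append(self, decision: str, source: str, trust: float) -> Record:
        prev = self._records[-1].digest() if self._records else ""
        record = Record(len(self._records), decision, source, trust, prev)
        self._records.append(record)
        return record

    def replay(self) -> List[Tuple[int, str, str]]:
        """Return (seq, decision, source) in the order the decisions were made."""
        return [(r.seq, r.decision, r.source) for r in self._records]

    def verify_chain(self) -> bool:
        """Check that each record still points at the digest of its predecessor."""
        prev = ""
        for record in self._records:
            if record.prev_hash != prev:
                return False
            prev = record.digest()
        return True

log = AuditLog()
log.append("route to adder", "rule: problem contains '+'", 0.9)
log.append("accept answer 5", "verifier: recheck", 1.0)
```

Calling `log.replay()` lists the decisions in order with their sources, and `log.verify_chain()` returns `False` if an earlier record is later altered.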

Dream Mode reviews failures, simulates better routes, and builds better models for the next day.
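The review-and-simulate loop can be pictured as replaying each failed problem through alternative routes and remembering the first one that passes verification. The sketch below is a deliberately small stand-in: `dream_cycle`, the two toy routes, and the verifier are hypothetical and say nothing about how Dream Mode is actually implemented.

```python
from typing import Callable, Dict, List

def dream_cycle(failed_problems: List[str],
                routes: Dict[str, Callable[[str], str]],
                verify: Callable[[str, str], bool]) -> Dict[str, str]:
    """Replay failures through candidate routes; map each problem to the first verified route."""
    learned: Dict[str, str] = {}
    for problem in failed_problems:
        for name, solve in routes.items():
            try:
                answer = solve(problem)
            except Exception:
                continue  # this route cannot handle the problem at all
            if verify(problem, answer):
                learned[problem] = name
                break
    return learned

# Toy routes: the integer adder is tried first, the float adder is the alternative.
def int_adder(problem: str) -> str:
    left, right = problem.split("+")
    return str(int(left) + int(right))

def float_adder(problem: str) -> str:
    left, right = problem.split("+")
    return str(float(left) + float(right))

def sums_match(problem: str, answer: str) -> bool:
    left, right = problem.split("+")
    return abs(float(answer) - (float(left) + float(right))) < 1e-9

routes = {"int_adder": int_adder, "float_adder": float_adder}
learned = dream_cycle(["1.5+2", "a+b"], routes, sums_match)
print(learned)  # {'1.5+2': 'float_adder'}
```

Problems that no route can solve, such as `"a+b"` here, stay out of the result and would remain candidates for LLM fallback.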

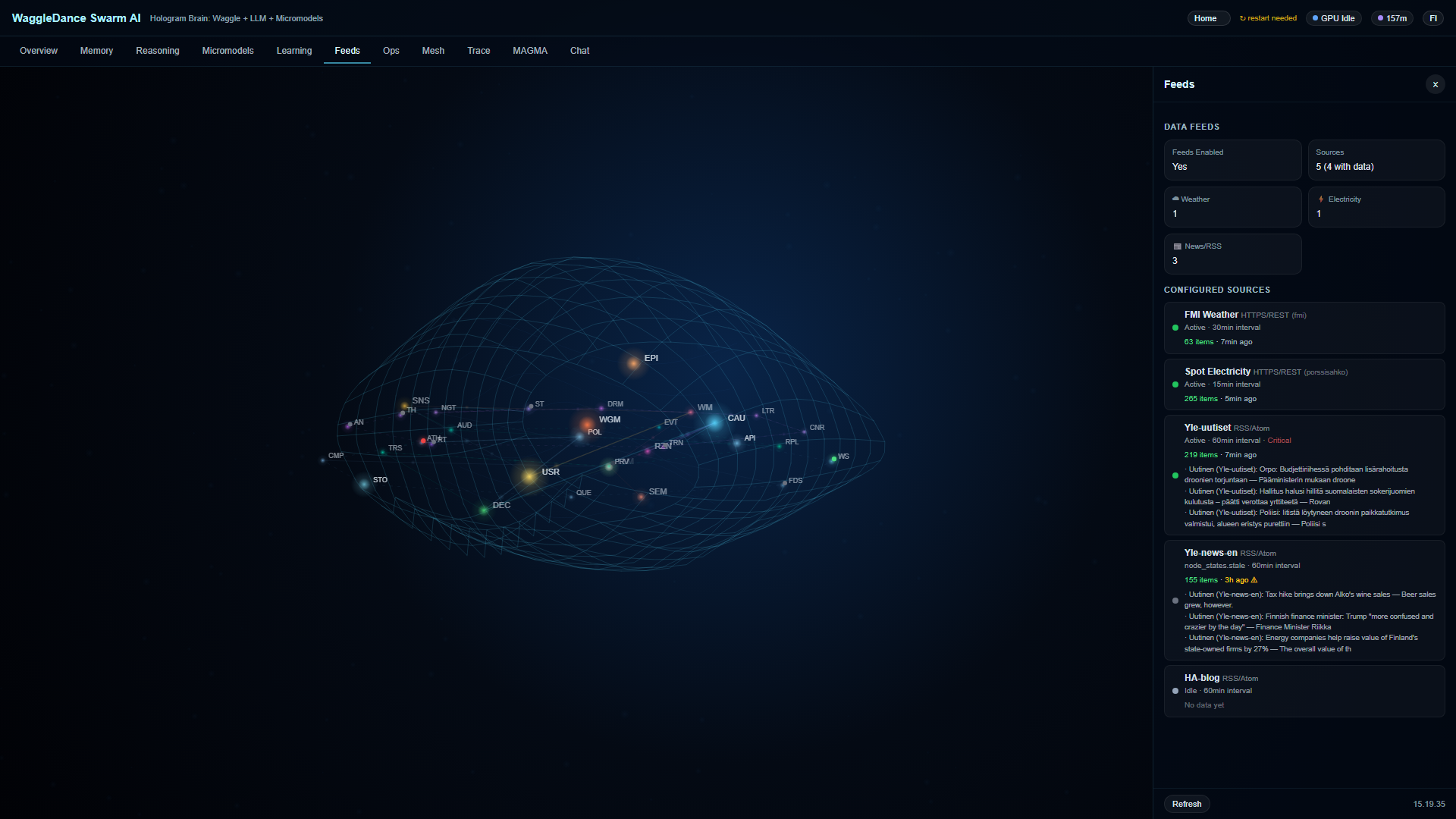

Hologram Brain shows the state of 32 nodes in real time. You are not looking at a black box: you are watching a working system.

Everything runs in your own environment. No forced cloud, no prompt data leaving your machine, no SaaS dependency.

The same code runs from a Raspberry Pi to a factory profile. It is not only a demo, and not only a system.

RPi, edge, sensor

Offline, unstable connection

Local automation

Monitoring, anomalies, auditing

The dashboard and Hologram Brain are available immediately after startup. First response speed depends on the profile, the hardware, and whether full or stub mode is in use.

The prompt is sent directly to Grok and is also copied to your clipboard as a backup

Grok opens in a new tab with the prompt ready

You get a full analysis of the repo, a scored competitor comparison, and an enterprise readiness plan.

If pre-fill does not work, paste it manually: the prompt is already on your clipboard.

You can also use the same prompt in Claude, ChatGPT, or another LLM. Grok is the default option on this page.

When you click "Analyze Repository", the AI runs an in-depth analysis covering:

Main branch, architecture, modules, and recent commits

What is implemented vs. what is planned or aspirational

Test coverage, practical maturity, and production readiness

Memory features, audit trail, provenance, and trust mechanisms

Scores of 1-10 on six axes against Home Assistant, Node-RED, n8n, Open WebUI, LangGraph, AutoGen, CrewAI, Ollama

Enterprise use cases, risks, missing integrations, deployment blockers

Click the prompt to copy it. Paste it into your Grok session for in-depth research.

Choose a profile and get tailored deployment guidance from Grok.

Every tool below is good at what it does. The comparison shows how WaggleDance's solver-first architecture differs; it is not meant to say the others are bad.

clone → docker compose up -d: Ollama, Voikko (Finnish NLP), and the app all in one.

No competitor improves autonomously over time. WaggleDance is the only one that builds cumulative expertise.

| Time | WaggleDance | Home Assistant | LangGraph | AutoGen/CrewAI | Node-RED/n8n | Ollama |
|---|---|---|---|---|---|---|
| Day 1 | LLM fallback ~30-50%, solvers learning | Same as always | Same as always | Same as always | Same as always | Same as always |
| Month 1 | HotCache fills, LLM ~20-30%, first canary promotions | No change | No change | No change | No change | No change |
| Month 6 | LLM ~10-15%, specialists maturing, ~180 nights of Dream Mode | No change | No change | No change | No change | No change |
| Year 1 | LLM ~5-8%, MAGMA with thousands of audited paths | No change | No change | No change | No change | No change |
| Year 2 | LLM <3-5%, >95% deterministic, TCO a fraction of day 1 | No change | No change | No change | No change | No change |

After day 1, every competitor column reads "No change". They don't learn. They don't improve. On day 730, they are exactly the same as on day 1.
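To put the WaggleDance column in relative terms, the short calculation below takes the midpoint of each LLM fallback range from the table and divides it by the day 1 midpoint. It assumes the table's figures as given and treats the Year 2 entry as the range 3-5%; it measures only the share of requests that reach the LLM, not total cost of ownership.

```python
# LLM fallback ranges in percent, copied from the table above.
fallback_ranges = {
    "Day 1": (30, 50),
    "Month 1": (20, 30),
    "Month 6": (10, 15),
    "Year 1": (5, 8),
    "Year 2": (3, 5),
}

def midpoint(bounds):
    low, high = bounds
    return (low + high) / 2

baseline = midpoint(fallback_ranges["Day 1"])  # 40.0
relative = {period: midpoint(bounds) / baseline
            for period, bounds in fallback_ranges.items()}

for period, share in relative.items():
    print(f"{period}: {share:.2f}x the day 1 LLM share")
```

With these midpoints, Year 2 comes out at about one tenth of the day 1 LLM share (4% against 40%).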

Yes. Download and run immediately. Apache 2.0 parts are freely usable. Non-commercial personal use of BUSL-protected modules is permitted. For commercial use, check the license terms on GitHub.

No. WaggleDance is designed to work fully offline on local hardware. Internet is only needed for initial setup and updates.

Minimum: Raspberry Pi 4 or equivalent (GADGET profile). Recommended: modern x86 server for multi-agent orchestration (FACTORY profile).

You get a quick second technical opinion on the public repo, documentation, and competitive landscape. You can use the same prompt in Claude, ChatGPT, or any other LLM.

An auditing and provenance framework. Every agent decision is recorded so you get traceability, replay, and trust assessment visibility.

An overnight learning mode where the system reviews the day's failures, simulates better routes, and builds better models for the next day — automatically without user action.

Dashboard and Hologram Brain are available immediately. First response speed depends on profile and hardware.