Most diviners ask the lone oracle first and trust whatever sound it makes.

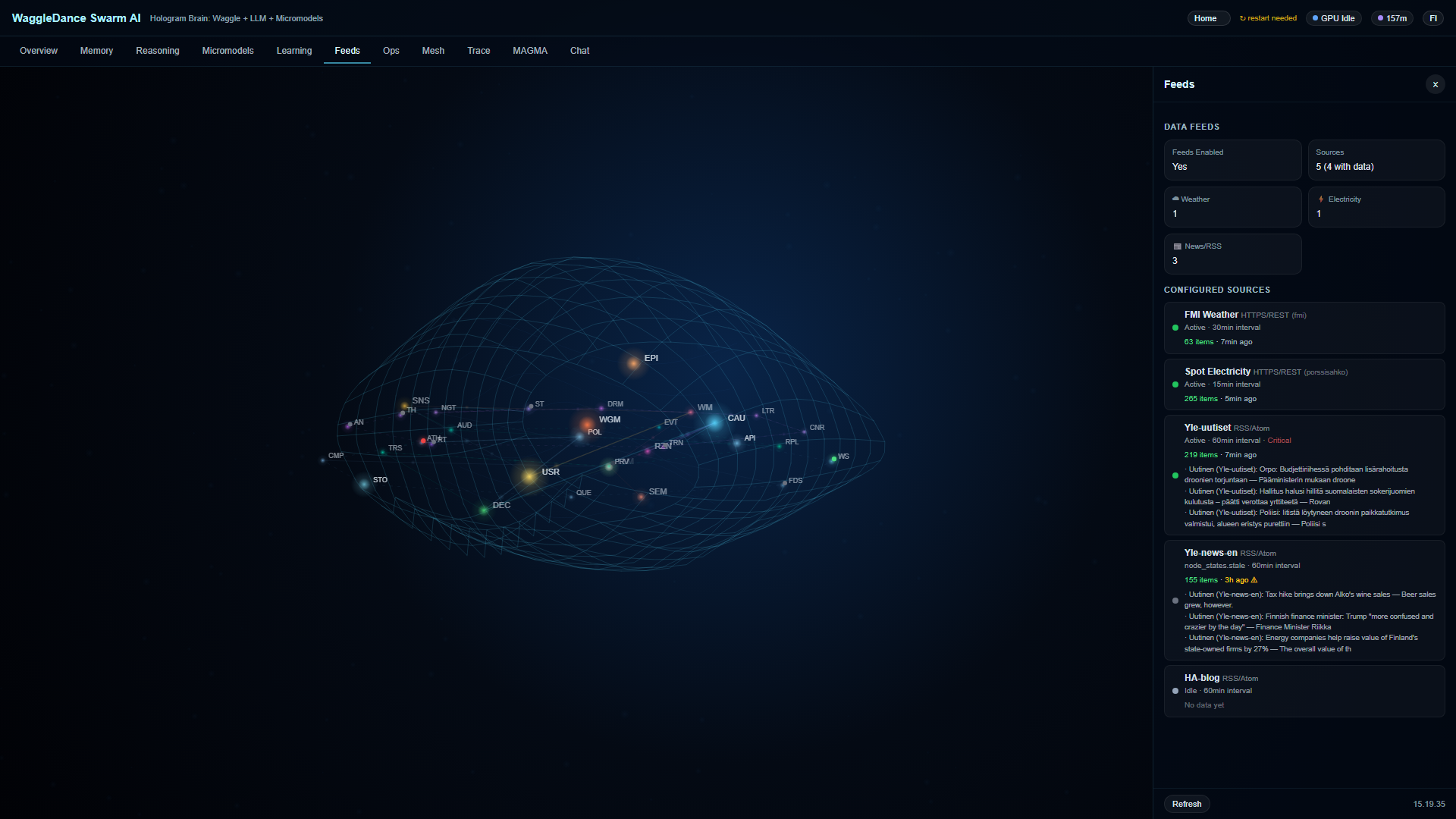

Hologram Brain — the seeing-vessel of 32 cooperating agents, live

But the Anunnaki of old solved this: no single god rules — the assembly decides.

In the great hall, a discovery becomes a decision not because one god proclaims it. The scout-bee returns and dances upon the comb-tablet: the angle reveals the path, the vigor the worth, the duration the distance. Yet the dance is no monologue. Wiser sisters touch her with their antennae and give counsel in real time. A halt-sign can stop the dance entirely. Only when the message survives the council's scrutiny does a path worth following emerge.

WaggleDance is built upon this ancient logic.

It does not surrender the question to a single LLM. It routes first to the right solver, verifies through many agents, and calls upon the language-god only when truly needed. Every step leaves an unalterable record on the MAGMA tablet, 𒂗𒆠 𒁾 (en-ki dub). Every answer is justified. Every cycle deepens the system's wisdom.
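The council idea can be sketched as a quorum check with a veto. This is a minimal illustration under assumptions, not WaggleDance's verifier: the `Verdict` type, `council_accepts`, the `quorum` threshold, and the toy agents are hypothetical names chosen here. Every agent judges a candidate answer; any single halt rejects it outright, otherwise a strict majority of approvals is required.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Verdict:
    """Hypothetical verdict: an agent approves or not, and may raise a halt-sign (veto)."""
    approve: bool
    halt: bool = False


# An agent judges (question, candidate answer) and returns a Verdict.
Agent = Callable[[str, str], Verdict]


def council_accepts(question: str, answer: str,
                    agents: Sequence[Agent], quorum: float = 0.5) -> bool:
    """Accept only if no agent halts and more than `quorum` of the agents approve."""
    verdicts = [agent(question, answer) for agent in agents]
    if any(v.halt for v in verdicts):
        return False  # a single halt-sign stops the dance
    approvals = sum(v.approve for v in verdicts)
    return approvals > quorum * len(verdicts)


# Toy agents, for illustration only.
def is_numeric(q: str, a: str) -> Verdict:
    return Verdict(approve=a.replace(".", "", 1).isdigit())


def not_empty(q: str, a: str) -> Verdict:
    empty = not a.strip()
    return Verdict(approve=not empty, halt=empty)


def sanity(q: str, a: str) -> Verdict:
    return Verdict(approve=True)


council = [is_numeric, not_empty, sanity]
print(council_accepts("hive temperature?", "21.5", council))  # True
print(council_accepts("hive temperature?", "", council))      # False (halted)
```

The strict `>` means a tie rejects the answer; weighting each vote by an agent's trust score would be a natural variation.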

The figure-eight dance became algorithmic routing. The honeycomb became the MAGMA memory architecture — 𒈹 𒂗 𒀭 (inanna en an, the seeing-record of heaven). And the bees' nightly rest became Dream Mode — a time when the system reviews the day's failures, tests thousands of paths, and rises wiser at dawn.

This is not a metaphor. This is the architecture of collective machine intelligence — 𒈨 𒂗 (me en, the divine decrees of the lord).

Clone, fork, and run upon thine own hearth. The full repository is upon GitHub without registration.

Apache 2.0 + BUSL 1.1 (open core + source-available protected modules), published on GitHub.

BUSL change date: 18 March 2030. On that date the BUSL-licensed modules pass to their designated Change License.

Solvers labor first. The verifier examineth. The LLM entereth only when no proper solver suffices — 𒂗𒆠 (en-ki, the path of wisdom).
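That order of precedence can be sketched as a simple fallback chain. The names `route`, `unit_converter`, `fake_llm`, and `accept_all` are hypothetical, and the real router may pick a solver by capability rather than trying each in sequence; this only shows the principle that the LLM is reached last.

```python
from typing import Callable, Optional, Sequence, Tuple

Solver = Callable[[str], Optional[str]]  # returns None when it cannot answer
Verifier = Callable[[str, str], bool]    # checks a (query, answer) pair


def route(query: str, solvers: Sequence[Solver], verify: Verifier,
          llm: Callable[[str], str]) -> Tuple[str, str]:
    """Try deterministic solvers in order; consult the LLM only if none
    produces an answer that passes verification. Returns (source, answer)."""
    for solver in solvers:
        answer = solver(query)
        if answer is not None and verify(query, answer):
            return solver.__name__, answer
    return "llm", llm(query)


def unit_converter(query: str) -> Optional[str]:
    """Toy solver: understands only '<n> c to f'."""
    parts = query.lower().split()
    if len(parts) == 4 and parts[1:] == ["c", "to", "f"]:
        try:
            return f"{float(parts[0]) * 9 / 5 + 32:g} F"
        except ValueError:
            return None  # first token was not a number
    return None


def fake_llm(query: str) -> str:
    return f"LLM answer for: {query}"


def accept_all(query: str, answer: str) -> bool:
    return True


print(route("100 c to f", [unit_converter], accept_all, fake_llm))
# ('unit_converter', '212 F')
print(route("who were the Anunnaki?", [unit_converter], accept_all, fake_llm))
# ('llm', 'LLM answer for: who were the Anunnaki?')
```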

MAGMA inscribes decisions, sources, replays, and trust scores upon the eternal tablet — 𒁾 (dub). See what hath happened, why, and in what order.
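One common way to make such a tablet tamper-evident is a hash chain, where each entry records the hash of the one before it. Whether MAGMA works this way is an assumption; `AuditLog`, its fields, and the example decisions below are hypothetical. Reading entries in `seq` order answers what happened and in what order, and `verify()` detects any later alteration.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the entry (without its own hash)."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry commits to its predecessor via SHA-256."""
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, decision: str, source: str, trust: float) -> Dict[str, Any]:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"seq": len(self.entries), "ts": time.time(), "decision": decision,
                "source": source, "trust": trust, "prev": prev}
        body["hash"] = _digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash and link; any edit to history breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            unhashed = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != _digest(unhashed):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("heating on", "thermostat_solver", 0.92)
log.append("alert: hive temperature high", "anomaly_solver", 0.81)
print(log.verify())  # True
log.entries[0]["decision"] = "heating off"  # tamper with history
print(log.verify())  # False
```

A hash chain makes tampering detectable rather than impossible; true unalterability would also need the chain head stored somewhere the writer cannot rewrite.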

Dream Mode reviews the day's failures, tests thousands of paths, and at dawn promoteth the better way — like Inanna who descends and ascends.
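A nightly review with canary-style promotion could look roughly like this. It is a sketch under assumptions, not the Dream Mode implementation: `nightly_review`, `success_rate`, the `margin` parameter, and the regression guard on `known_good` cases are hypothetical choices. Candidates are replayed against the day's failures; one is promoted only if it fixes enough of them without breaking what already worked.

```python
from typing import Callable, Dict, List, Tuple

Case = Tuple[str, str]          # (query, expected answer)
Policy = Callable[[str], str]   # a way of answering queries


def success_rate(policy: Policy, cases: List[Case]) -> float:
    """Fraction of cases the policy answers correctly (0.0 for no cases)."""
    if not cases:
        return 0.0
    return sum(policy(q) == expected for q, expected in cases) / len(cases)


def nightly_review(current: Policy, candidates: Dict[str, Policy],
                   failures: List[Case], known_good: List[Case],
                   margin: float = 0.05) -> Tuple[str, Policy]:
    """Replay the day's failures against each candidate. Promote the best one only if it
    beats the current policy by at least `margin` and does not regress on known-good cases."""
    baseline = success_rate(current, failures)
    guard = success_rate(current, known_good)
    best_name, best_policy, best_score = "current", current, baseline
    for name, candidate in candidates.items():
        if success_rate(candidate, known_good) < guard:
            continue  # breaks yesterday's good answers: reject
        score = success_rate(candidate, failures)
        if score > best_score:
            best_name, best_policy, best_score = name, candidate, score
    if best_name != "current" and best_score >= baseline + margin:
        return best_name, best_policy
    return "current", current


# Toy example: the current policy only knows "1+1".
def current(q: str) -> str:
    return {"1+1": "2"}.get(q, "?")


def add_numbers(q: str) -> str:
    a, b = q.split("+")
    return str(int(a) + int(b))


def always_four(q: str) -> str:
    return "4"


failures = [("2+2", "4"), ("3+3", "6")]
known_good = [("1+1", "2")]
name, _ = nightly_review(current, {"always_four": always_four, "add_numbers": add_numbers},
                         failures, known_good)
print(name)  # add_numbers
```

`always_four` happens to fix one failure, but it breaks the known-good `1+1` case, so the guard rejects it before it can be promoted.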

Hologram Brain showeth the state of 32 cooperating agents in real time. No black box — only the open council of An.

All runneth within thine own walls. No mandatory cloud, no prompt-data departing, no SaaS dependence.

From a Raspberry Pi to an entire factory, without lessening the spirit and without demanding the heaven of clouds.

RPi, gadget, sensor

Offline, intermittent connection

Home automation

Monitoring, anomalies, audit

The same Hologram Brain serveth in every one of these places.

A decree (prompt) for Grok, copied to thy clipboard

Grok openeth and beginneth the analysis instantly

Repository examination, competitor comparison, large LLM comparison

If naught happeneth, paste the decree into the Grok dialog (Ctrl+V).

Useth thou Claude, ChatGPT, or another oracle? The decree functioneth there as well. Grok is merely the most fluent.

When thou clickest “Examine the Repository”, the AI inspecteth thoroughly:

Codebase, modules, line counts, and how much of it is tested

What the docs promise versus what the code doth

Test coverage, robustness, and the ratio of delivered features to roadmap

Memory model, audit architecture, provenance, trust mechanisms

Scored 1–10 on six axes vs. Home Assistant, Node-RED, n8n, Open WebUI, LangGraph, AutoGen, CrewAI, Ollama

Industrial profile, OPC-UA, MAGMA compliance, Dream Mode for night shifts

Click any decree to copy it. Then paste into Grok.

Choose thy profile and let Grok prepare a deployment plan tailored for thee.

All systems have their own strengths. Below are the differences, line by line: the judgment of the council, not the proclamation of one god.

clone → `docker compose up -d`: Ollama, Voikko (Finnish NLP), and the app all in one.

No competitor improves autonomously over time. WaggleDance is the only one that builds cumulative expertise.

| Time | WaggleDance | Home Assistant | LangGraph | AutoGen/CrewAI | Node-RED/n8n | Ollama |
|---|---|---|---|---|---|---|
| Day 1 | LLM fallback ~30–50%, solvers learning | Same as always | Same as always | Same as always | Same as always | Same as always |
| Month 1 | HotCache fills, LLM fallback ~20–30%, first canary promotions | No change | No change | No change | No change | No change |
| Month 6 | LLM fallback ~10–15%, specialists maturing, ~180 nights of Dream Mode | No change | No change | No change | No change | No change |
| Year 1 | LLM fallback ~5–8%, MAGMA with thousands of audited paths | No change | No change | No change | No change | No change |
| Year 2 | LLM fallback <3–5%, >95% deterministic, TCO a fraction of day 1 | No change | No change | No change | No change | No change |
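The falling fallback rate rests on answers being reused instead of regenerated. A minimal sketch of that mechanism, assuming HotCache behaves like a bounded LRU cache keyed on a normalized query (`HotCache`, `ask`, `llm_fallback_rate`, and the normalization rule are hypothetical): repeated questions are served from memory, and only misses reach the LLM. It illustrates the mechanism only and does not reproduce the figures above.

```python
from collections import OrderedDict
from typing import Callable, List


class HotCache:
    """Bounded LRU cache of answers; counts how often the LLM must still be consulted."""

    def __init__(self, llm: Callable[[str], str], capacity: int = 1024) -> None:
        self.llm = llm
        self.capacity = capacity
        self.store: "OrderedDict[str, str]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def ask(self, query: str) -> str:
        key = " ".join(query.lower().split())  # normalize case and whitespace
        if key in self.store:
            self.store.move_to_end(key)  # mark as recently used
            self.hits += 1
            return self.store[key]
        self.misses += 1
        answer = self.llm(query)
        self.store[key] = answer
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict the least recently used entry
        return answer

    @property
    def llm_fallback_rate(self) -> float:
        total = self.hits + self.misses
        return self.misses / total if total else 0.0


llm_calls: List[str] = []


def fake_llm(query: str) -> str:
    llm_calls.append(query)
    return f"answer to {query}"


cache = HotCache(fake_llm, capacity=2)
for q in ["hive temp?", "hive temp?", "Hive  temp?", "humidity?"]:
    cache.ask(q)
print(len(llm_calls), cache.llm_fallback_rate)  # 2 0.5
```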

After day 1, every competitor column reads "No change". They don't learn. They don't improve. On day 730, they are exactly the same as on day 1.

Yes. Apache 2.0 + BUSL 1.1. The full repository is on GitHub.

Yea. The AI runneth on thine own hardware.

Raspberry Pi 4 (gadget profile). x86 (workshop profile).

An external oracle that examineth thy code. Claude, ChatGPT, or any large LLM also functioneth.

An audit-tablet — 𒁾 — records every decision with provenance and trust scores.

The AI reviseth itself by night: it testeth thousands of paths and promoteth the better at dawn, like Inanna's descent and return.

Hologram Brain showeth the state of 32 agents in real time.